Original link: 从ChatGPT到AI Agent,一文讲透 Agent 的底层逻辑 (From ChatGPT to AI Agent: The Underlying Logic of Agents, Explained in One Article)

TL;DR & Reading Guide

This article stems from a year and a half of practical AI development and a strong realization gained from intensive discussions with numerous teams over the past two months since my departure. I’ve found that when discussing Agents, we often fall into two traps: some mystify them, viewing them as all-powerful; others over-simplify them, dismissing them as "just calling ChatGPT a few more times."

This cognitive misalignment, which arises from a lack of practical experience with and understanding of the agentic loop process, leads to very high communication costs.

Therefore, I’ve written this long-form article to help practitioners like us establish a foundational intuition and consensus about Agents: The qualitative leap in AI Agent capability is not solely due to the increasing intelligence of the underlying Large Language Model (LLM); the more crucial factor is the design of the effective "cognitive workflow" that we build around the model.

This extensive article—nearly 10,000 words—is dedicated to building that intuition and completely deconstructing this "workflow." Use this guide to quickly navigate to the sections that interest you:

- Part One (0x01 & 0x02): Building an Intuitive Understanding

  - Here, I use the analogy of "The Five Growth Stages of a Top Student" to describe the evolution of the Agent's core capabilities.

  - We'll analyze the widely used "Travel Planning" case study. Like a "standardized test," the comparison clearly shows the fundamental difference between a dynamic process and a one-shot generation.

- Part Two (0x03 & 0x05): The Core for Developers

  - 0x03 is the technical core of this article. It breaks down the triple value delivered by the "workflow": how to use "Structure" to scaffold thinking, how to use "Iteration" to build a compression algorithm for memory, and how to use "Interaction" to connect the model to the real world.

  - 0x05 discusses our role change, from "Prompt Engineer" to "Agent Workflow Architect," and explores Agent performance engineering and the future direction of architectural evolution.

- Part Three (0x04): Tracing the Theoretical Roots

  - Finally, if you're curious why the Think -> Act -> Observe loop is fundamentally effective, 0x04 reveals its underlying scientific bedrock, which has deep ties to classical Cybernetics and Information Theory.

I hope this article provides a solid starting point for our future discussions and collaborations.

0x00 If You Could Take the College Entrance Exam Again

Over the past few months, I've talked to countless developers about AI Agents. A common confusion is that while many know about the abstract Think -> Act -> Observe loop, they can't truly feel its power. They ask:

"Isn't this just me having a multi-turn chat with ChatGPT? Why does automating it lead to a qualitative leap?"

To answer this, let's revisit a universally familiar scenario.

A potentially tough question: If time rewound, and you took the same exam again the day after finishing it, would your score be higher?

Many who regretted their original score would instantly say yes:

- "Right after the test, I realized I could have easily solved that complex analytical geometry problem using a 'graphical approach' instead of the algebraic one I got stuck on."

- "I was so nervous that I thought 'B' for a multiple-choice question but bubbled 'C,' and didn't catch it during the final check."

- "I just managed my time poorly! I had the full, correct logic for the last physics problem on my scratch paper but ran out of time to transfer it to the answer sheet!"

With just these minor adjustments, improving your score by dozens of points isn't impossible.

In that single day, your core knowledge base—built over twelve years of hard study—didn't change qualitatively. This is like today's Large Language Models (LLMs): their core knowledge is "frozen" the moment training completes, forming a vast but static knowledge base.

So, where does the score improvement come from?

The answer is the very core of understanding the AI Agent: The Agent's power doesn't come from another leap in the model's intelligence, but from the massive improvement in the "cognitive workflow" we equip it with.

This "workflow" is analogous to the exam strategies you learn: the "easy-first, hard-later" time allocation, the mandatory "final review" habit before submission, and the adaptability to "switch approaches" when a problem becomes intractable.

To understand how this workflow is built step-by-step in AI, let's follow the growth story of "Xiao Ming," a top student.

0x01 An Analogy for the Agent's Core: The Five Growth Stages of a Top Student

Xiao Ming's growth is an evolution of constantly optimizing his "problem-solving process."

Stage 1: The Raw Talent

Xiao Ming is naturally gifted and thinks incredibly fast. For math problems, he's overly confident, doing all calculations mentally. During exams, he's always the first to slap his paper on the desk and casually walk out. His score? Don't ask; just focus on the "speed!" He finishes the test, but he never knows how many computational errors resulted from skipping steps or how many fundamental mistakes were made due to careless reading.

This perfectly mirrors our earliest usage of Large Language Models (LLMs): The Basic API Call. We toss a problem to it, and it generates a one-shot, black-box answer. The answer looks complete, even eloquent, but how was it derived? Are there any factual errors? We have no clue. The AI at this stage is like Xiao Ming, who prioritizes speed, delivering a result without guaranteeing its reliability.

Stage 2: The Thinker

After losing points due to carelessness a few times, the teacher mandated a rule: all problems must have detailed steps written on scratch paper. Xiao Ming reluctantly complied but found that his accuracy soared. By "externalizing" his thought process on paper, he could easily spot minor flaws in his logical chain.

[Core Concept: Chain of Thought (CoT)]

CoT is the first and most fundamental structured thinking process. Its core function is to force the model to decompose a complex, one-shot "guessing" task into a series of simple, linear "reasoning" subtasks. It acts as a rein, pulling back the wild horse of thought trying to "skip steps," forcing it to focus on the current step, using the conclusion of the previous step to deduce the next, which drastically reduces the probability of the model hallucinating.

Wei, J., Wang, X., Schuurmans, D., Bosma, M., Chi, E., Le, Q., & Zhou, D. (2022). Chain-of-Thought Prompting Elicits Reasoning in Large Language Models.
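To make the contrast with a one-shot call concrete, here is a minimal sketch of how the same question can be framed either way. The helper names and the exact instruction wording are assumptions made for illustration; they are not prompts taken from the paper.

```python
from typing import Optional


def direct_prompt(question: str) -> str:
    """One-shot style: ask for the answer only."""
    return f"Question: {question}\nAnswer:"


def cot_prompt(question: str) -> str:
    """Chain-of-thought style: ask for numbered intermediate steps first."""
    return (
        f"Question: {question}\n"
        "Work through the problem step by step, numbering each step, "
        "and use the result of each step in the next one.\n"
        "Finish with a line that starts with 'Final answer:'.\n"
        "Step 1:"
    )


def extract_final_answer(completion: str) -> Optional[str]:
    """Pull the final answer out of a step-by-step completion, if present."""
    for line in reversed(completion.splitlines()):
        if line.strip().lower().startswith("final answer:"):
            return line.split(":", 1)[1].strip()
    return None
```

The externalized steps are what make the reasoning inspectable: a reviewer (human or model) can check each line, much as Xiao Ming can check his scratch paper.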

Stage 3: The Meticulous Reviewer

Xiao Ming advanced further. He not only drafts his solutions but has learned a new skill. When he finishes early, with ten minutes left in the exam, he triggers a new "mental mode": the final review. At this moment, he stops being the "solver" and becomes a harsh "reviewer," critically examining his answers, actively seeking possible mistakes, and correcting them.

[Core Concept: Self-Reflection]

The essence of the Reflexion framework is to introduce a self-iterative process for the Agent: 'act first, then review, then correct.' After completing a task, the Agent doesn't rush to output the final result; instead, it generates a "review note" on the execution process. In the next attempt, this "error log" information is included as a crucial reference in the context to guide the Agent to avoid repeating past mistakes.

The paper reports that, through this iterative process, Reflexion reached 91% pass@1 accuracy on the HumanEval code generation benchmark, surpassing the 80% reported for GPT-4 at the time. This shows how much leverage "process" optimization can provide.

Shinn, N., Cassano, F., Gopinath, A., Narasimhan, K., & Yao, S. (2023). Reflexion: Language Agents with Verbal Reinforcement Learning.
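The "act first, then review, then correct" cycle can be sketched as a small loop. This is an illustrative skeleton, not the paper's implementation: `attempt`, `evaluate`, and `reflect` are hypothetical callables standing in for the model call, a test harness, and the model's self-review.

```python
from typing import Callable, List, Tuple


def reflexion_loop(
    attempt: Callable[[List[str]], str],
    evaluate: Callable[[str], Tuple[bool, str]],
    reflect: Callable[[str, str], str],
    max_trials: int = 3,
) -> Tuple[str, List[str]]:
    """Act, review, then retry with the accumulated review notes in context."""
    notes: List[str] = []
    answer = ""
    for _ in range(max_trials):
        answer = attempt(notes)                  # act, seeing earlier lessons
        ok, feedback = evaluate(answer)          # e.g. run unit tests
        if ok:
            break
        notes.append(reflect(answer, feedback))  # verbal "error log"
    return answer, notes


# Toy stand-ins for the model and the test harness (hypothetical).
def toy_attempt(notes: List[str]) -> str:
    return "return a + b" if notes else "return a - b"


def toy_evaluate(answer: str) -> Tuple[bool, str]:
    return (answer == "return a + b", "add(2, 3) returned -1, expected 5")


def toy_reflect(answer: str, feedback: str) -> str:
    return f"Previous attempt '{answer}' failed: {feedback}. Use addition."


final, lessons = reflexion_loop(toy_attempt, toy_evaluate, toy_reflect)
```

In the toy run, the first attempt fails, one review note is written, and the second attempt succeeds because that note is now part of its input.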

Stage 4: The Strategist

As exam difficulty increased, Xiao Ming realized that problem-solving skill and meticulousness weren't enough. In the first minute of receiving the paper, he stopped rushing to solve problems and instead quickly scanned the whole test to form a battle plan: which easy questions to secure first, which tough ones to save for last, and how to allocate time for each section. If a problem took longer than anticipated, he would dynamically adjust the plan.

[Core Concept: Planning]

This is a higher-level macro-process design. When tackling a complex task, an Agent with planning ability no longer takes things step-by-step, but first breaks down the grand goal into a series of logically clear subtasks, forming a plan checklist. This checklist is its blueprint for action, ensuring that every step serves the ultimate goal, significantly improving execution efficiency and certainty. This is a core component of most advanced Agent frameworks.

Stage 5: The Scholar

Later, Xiao Ming entered university and began real research. His task was no longer to answer standardized questions but to explore an open-ended frontier topic, such as "Analyze the penetration rate changes in China's new energy vehicle market in recent years."

The textbook knowledge in his head (the model's static weights) was clearly outdated for this topic. So, he initiated a brand-new working mode, which is the perfect embodiment of the Agent's most powerful Tool Use capability. The core of this isn't the tool itself, but the milestone workflow revealed by the ReAct framework, which deeply binds thinking and action: Thought -> Act -> Observe.

First, he Thinks: "My knowledge base only has old data from a few years ago and cannot directly answer this question. I must acquire the latest industry reports and data."

This thought drives him to Act. Instead of guessing, he chooses a tool—walking to the library, opening academic and industry databases, and searching for keywords. This corresponds to the Agent calling search_api("China NEV 2023-2025 Market Penetration Report").

Next, he Observes the result of the action: several recent reports and data charts appear on the screen. This is new information from the external world—objective facts—that he previously did not know.

Finally, armed with this observed new knowledge, he returns to the Think stage: "The latest data shows XX% penetration, driven mainly by Tier-2 cities, and a specific technology innovation is a key variable. Now, I can base my analysis and argument on these facts."

This loop transforms the AI from a closed "brain" into an "actor" capable of continuous interaction with the real world. Through tools, it breaks the limitations of its internal knowledge, using real-time, factual information to calibrate and iterate its thinking, thereby raising the quality and reliability of its output to a new level.

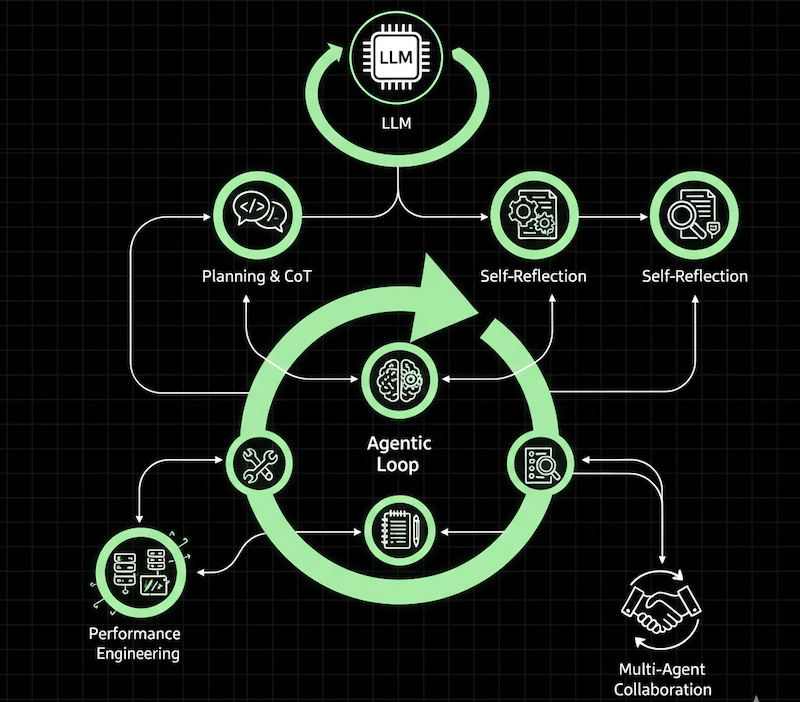

This completes Xiao Ming's evolution. He transitioned from a "Raw Talent" relying solely on natural gifts to a scholar capable of planning, reflection, and using tools to solve complex, open-ended problems. The capabilities he acquired along the way, namely drafting (CoT), reviewing (Self-Reflection), strategic planning (Planning), and resource lookup (Tool Use), are the core components that constitute a powerful AI Agent.

This is the complete evolution path from Chatbot to Agent.

[Core Concept: Tool Use and the ReAct Framework]

The brilliance of the ReAct framework is not the tool itself, but that it defines an interactive workflow that deeply binds 'Thinking' and 'Acting': Thought -> Act -> Observe.

This loop transforms the AI from a closed "brain" into an "actor" capable of continuous interaction with the real world. Through tools, it breaks the limitations of its internal knowledge, using real-time, factual information to calibrate and iterate its thinking, thereby raising the quality and reliability of its output to a new level.

Yao, S., Zhao, J., Yu, D., Du, N., Shafran, I., Narasimhan, K., & Cao, Y. (2022). ReAct: Synergizing Reasoning and Acting in Language Models.
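A minimal sketch of the loop itself may help. It assumes a hypothetical `policy` callable in place of the LLM and a stubbed `search_api` that returns placeholder text rather than real data, so it runs without a model or a network connection.

```python
from typing import Callable, Dict, List, Tuple

# A "policy" stands in for the LLM: given the transcript so far, it returns
# (thought, tool_name, tool_input), or (thought, "finish", answer).
Policy = Callable[[List[str]], Tuple[str, str, str]]


def react_loop(policy: Policy, tools: Dict[str, Callable[[str], str]],
               question: str, max_steps: int = 5) -> Tuple[str, List[str]]:
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, arg = policy(transcript)        # Think
        transcript.append(f"Think: {thought}")
        if action == "finish":
            return arg, transcript
        observation = tools[action](arg)                 # Act
        transcript.append(f"Act: {action}({arg!r})")
        transcript.append(f"Observe: {observation}")     # Observe
    return "No answer within the step limit.", transcript


# Hypothetical stand-ins so the sketch runs on its own.
def fake_search(query: str) -> str:
    return "Report (placeholder data): penetration figure = N%"


def scripted_policy(transcript: List[str]) -> Tuple[str, str, str]:
    if not any(line.startswith("Observe:") for line in transcript):
        return ("My knowledge is stale; I need recent data.",
                "search_api", "China NEV 2023-2025 Market Penetration Report")
    return ("I now have data to cite.", "finish",
            f"Answer based on: {transcript[-1]}")


answer, log = react_loop(scripted_policy, {"search_api": fake_search},
                         "How has NEV penetration changed?")
```

The important design point is that each observation is appended to the transcript, so the next Think step reasons over what the tool actually returned instead of over the model's recollection.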

0x02 From an "Elegant Monologue" to "Reliable Action"

Having discussed Xiao Ming's evolution, let's return to the travel planning example that every Agent demo uses and re-examine, through our new lens, what exactly makes the Agent superior.

"Help me plan a weekend trip for three people in Beijing, including the Forbidden City and a suitable science museum for a child, and provide an estimated total budget."

(Yes, I know, it's the same overused travel planning example. We put it on public trial here precisely because it is so classic and so illustrative.)

The Chatbot's Answer: A "Knowledgeable but Forgetful" Secretary

You pose this question to ChatGPT. Like Xiao Ming in his earliest stage, it generates a seemingly perfect guide without hesitation:

**Beijing Family Weekend Exploration Trip** ✈️

Hello! I'm happy to plan this wonderful trip to Beijing for you. Here is the itinerary:

**Saturday: History and Culture Immersion** 🏰

- Morning: Visit the Forbidden City (Palace Museum) to experience the imperial grandeur. Suggest booking tickets on the official website in advance, tickets are ¥60/person.

- Afternoon: Head to the "Beijing Children's Science Exploration Center" in Xicheng District, which features many interactive exhibits perfect for children.

- Evening: ...

**Budget Estimate:** 💰

- Tickets: (¥60/Forbidden City + ¥50/Science Center) x 3 people = ¥330

- Accommodation: ...

- Dining: ...

- **Total: Approximately ¥1,500**

The guide is perfectly formatted, eloquently written, warmly toned, and even uses emojis. It looks exactly like any travel post you'd scroll past on social media.

However, a closer inspection of this "guide" reveals it to be superficial and full of holes:

- Outdated Information (Hallucination): The ticket price for the Forbidden City is likely from a stale webpage in its training data. It has no way of knowing that, during the current peak season, the reservation method has changed completely.

- Fabricated Information (Hallucination): The "Beijing Children's Science Exploration Center" sounds great, but if you actually search for it, you'll find it does not exist. The model statistically "created" the most likely combination based on keywords like "Beijing," "Children," and "Science Museum."

- Lack of Executability: The "¥1,500" budget is a vague figure. You cannot trust it, nor can you base any decision on it.

This is the essence of a Chatbot: a "Statistically Elegant Text Imitation." Because it executes a static, one-shot generation process, it has no mechanism to verify, reflect, or interact with reality.

The AI Agent's Answer: A "Reliable but Slightly Stiff" Doer

Now, we give the same task to an AI Agent. You won't immediately get a guide mixed with emojis. Instead, if you look at its detailed execution log, you'll see a dynamic, goal-oriented action unfolding.

It will, like a real project manager, stream its "inner monologue" and "actions":

Think (Planning): "Task received. Step 1, create a plan: 1. Verify Forbidden City ticket status. 2. Search for a genuinely existing, child-friendly science museum in Beijing. 3. Query exact prices and opening hours for all locations. 4. Consolidate a precise budget. 5. Generate the final itinerary."

(See? This is the 'Planning' process from 0x01 kicking in, establishing the macro-execution framework for the entire task.)

Think: "Executing Step 1 of the plan: Verify Forbidden City tickets."

Act: search_api("Forbidden City official website booking")

Observe: "Official website alert: Tickets for this week are sold out. Advance booking one week prior is required."

Think: "Plan Blocked! Forbidden City is inaccessible. This conflicts with the user's core requirement, so the plan must be dynamically adjusted (Re-planning). New Plan: 1. Clearly inform the user that Forbidden City tickets are sold out. 2. Proceed with the original plan's second step: search for a science museum and structure the new itinerary around it."

(This critical adjustment is the result of the 'Reflection' process being triggered, allowing the Agent to learn from failure.)

This Think -> Act -> Observe loop continues until all steps in the plan are completed. The final report it presents might be less "eloquent," but it is grounded in facts the Agent actually retrieved and is fully actionable:

**Beijing Family Weekend Trip Plan (Revised)**

Hello, during the real-time query for your itinerary, I found that [Forbidden City tickets for this week are completely sold out].

Therefore, I have re-planned a science-themed alternative for you:

**Core Itinerary:**

- **Location:** China Science and Technology Museum (Address: No. 5 Beichen East Road, Chaoyang District, Beijing)

- **Ticket Information:** Main exhibition hall adult ticket ¥30/person, student ticket ¥20/person. Assuming your child qualifies for a student ticket, the total ticket cost for three people is 30x2 + 20x1 = ¥80.

- ... (Followed by further queries for transport, dining, etc., leading to a precise budget)

At this point, the stark difference between a Chatbot and an Agent is self-evident.

The former looks beautiful, but you'd never dare use it on a crucial test because it's only replicating an answer; the latter's process might be slow and clunky, but it delivers a tangible result because it is executing a process to find the answer.
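The plan-then-re-plan behavior in the execution log can be sketched as a small checklist structure. The class and method names below are hypothetical and chosen for illustration; a real Agent framework would have the model generate and revise the steps.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    description: str
    status: str = "pending"   # pending | done | blocked


@dataclass
class Plan:
    goal: str
    steps: List[Step] = field(default_factory=list)

    def next_step(self) -> Optional[Step]:
        """Return the first step that still needs work, if any."""
        return next((s for s in self.steps if s.status == "pending"), None)

    def replan(self, blocked: Step, replacements: List[str]) -> None:
        """Mark a step as blocked and splice replacement steps in after it."""
        blocked.status = "blocked"
        i = self.steps.index(blocked)
        self.steps[i + 1:i + 1] = [Step(d) for d in replacements]


plan = Plan("Weekend trip in Beijing", [
    Step("Verify Forbidden City ticket status"),
    Step("Search for a child-friendly science museum"),
    Step("Consolidate a precise budget"),
])
first = plan.next_step()
# The tool reported "sold out", so the plan is adjusted instead of abandoned.
plan.replan(first, ["Inform the user that Forbidden City tickets are sold out"])
```

After the re-plan, the blocked step stays in the checklist as a record, and the next pending step is the newly inserted one.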

0x03 The Agent's Core Driver: Taming Model Power with "Process"

When many first encounter an Agent and open its execution log, they have a counter-intuitive confusion:

"Why does it feel so 'slow' and 'clumsy'? Checking one thing takes multiple turns. It’s far less 'smart' than me just asking ChatGPT and getting an instant answer!"

This "slowness" is precisely the first door to the Agent's power. It reveals a profound shift: we are moving from pursuing the one-shot, intuitive "Fast Thinking" of the LLM to designing a structured, verifiable "Slow Thinking" approach.

The core of this "Slow Thinking" is the Process we design for the AI.

The Agent's core driving force doesn't come from another explosion in the model's intelligence but from the workflow we design for it. Everything the developer does is essentially trading "more computational steps" and "longer thinking time" for something critically important in the real commercial world—the "high quality" and "certainty" of the result.

What exactly does this process bring to the Agent?

Value 1: Using "Structure" Against "Chaos"—Scaffolding Thought

First, we must admit that the LLM's native thinking is "diffuse" and "flat." It's like a knowledgeable but scatterbrained genius whose long-chain reasoning is very fragile when facing a truly complex task; it can easily "go astray" in a middle step or simply "forget" the original goal.

The processes we design—Planning, Chain of Thought (CoT), and even the more complex Tree of Thoughts (ToT)—are essentially forcing a "logical scaffold" onto the AI's messy thinking process.

- The Planning process acts as the architect's master blueprint. At the start of the task, it breaks down a goal too grand to be thought about in one go ("build a skyscraper") into a series of clear, ordered, executable steps ("Step 1: lay the foundation; Step 2: build the main structure; Step 3: top out..."). It ensures every AI action serves the final goal—this is using structure against chaos at the "macro" level.

- Chain of Thought (CoT) is more like the on-site worker's manual. It ensures the internal logic of each step is rigorous ("The rebar must be tied this way, the concrete must be C50 grade"). It compels the AI's reasoning to be tightly linked and incremental—this is using structure against chaos at the "micro" level.

The presence of this "scaffold" ensures the AI does not collapse mid-way due to scattered thoughts when tackling complex problems. It vastly raises the AI's ceiling for solving complex problems.

Value 2: Using "Iteration" Against "Forgetting"—A Compression Algorithm for Memory

The LLM's most fatal flaw is its limited "attention," which we commonly refer to as the Context Window. It's like a fish with a 7-second memory; it can neither process massive amounts of information at once nor remember long historical lessons.

The processes we design—Reflection, Summarization—are essentially custom-building an "efficient compression algorithm" for the AI's memory.

Let's return to the travel planning example. When the Agent learns through a tool that "Forbidden City tickets are sold out," the Reflexion process is triggered. What does it do?

It doesn't stuff all the verbose, raw information—all the HTML code, pop-up alerts, etc.—returned by the official website into its already precious "memory." If it did, its context window would quickly be filled with junk.

Instead, the process initiates "memory compression." It distills the failed interaction into a single, high-information-density conclusion and stores it as an "experience" in its memory:

"Experience: Forbidden City tickets require one-week advance booking; not feasible this week."

This process is a lossy but extremely effective compression. It discards the vast majority of irrelevant detail yet preserves the information critical for future decisions, all within a tiny context space. It is this small "experience note" that allows the AI to "learn from its mistakes" and iterate on its subsequent planning.

This "compression algorithm" gives the AI a dynamically evolving "long-term memory," allowing it to process longer timelines and complete more complex tasks within a finite attention span.
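As a rough sketch of this "memory compression," the class below stores a short lesson instead of the raw tool output. The `toy_summarize` function is a deliberately simple stand-in for what would normally be an LLM summarization call, and the HTML snippet is invented for the example.

```python
import re
from typing import Callable, List


class ExperienceMemory:
    """Stores short lessons instead of raw tool output."""

    def __init__(self, summarize: Callable[[str], str], max_notes: int = 20):
        self.summarize = summarize
        self.max_notes = max_notes
        self.notes: List[str] = []

    def record(self, raw_observation: str) -> str:
        note = "Experience: " + self.summarize(raw_observation)
        self.notes.append(note)
        self.notes = self.notes[-self.max_notes:]   # keep only the newest
        return note


def toy_summarize(raw: str) -> str:
    """Stand-in for an LLM call: strip tags, keep the sold-out sentence."""
    text = re.sub(r"<[^>]+>", " ", raw)
    sentences = [s.strip() for s in text.split(".") if "sold out" in s.lower()]
    return sentences[0] + "." if sentences else text.strip()[:80]


raw_page = ("<html><div class='banner'>Welcome.</div>"
            "<div class='alert'>Tickets for this week are sold out.</div>"
            "<script>track()</script></html>")
memory = ExperienceMemory(toy_summarize)
note = memory.record(raw_page)
```

Only the one-line note enters the context for later steps; the markup, banner, and script are dropped.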

Value 3: Using "Interaction" Against "Vaporware"—Connecting the Model to the Real World

No matter how sophisticated a process is internally designed, if it is completely isolated from the real world, it is simply "spinning its wheels." All its outputs might be logically perfect castles in the air, built solely on the model's internal "hallucinations."

Therefore, the process must have the ability to interact with the world. This is where we logically introduce the Tool.

A Tool is not a concept parallel to the Process. It's more like the "neural tentacles" at the end of the Process's "central nervous system."

- The ReAct framework is brilliant not because it's a tool framework, but because it's a process that deeply binds "Thinking" and "Acting (Tool Invocation)." Driven by this process, if the AI realizes during a thought that "my internal knowledge is insufficient to support the next decision," it will proactively extend the search_api() "tentacle," genuinely "touch" the internet, and then feed the sensed "temperature" (i.e., objective facts) back to the brain to continue the next step of thinking.

This mechanism ensures that our strategy of "trading time for quality" ultimately delivers "genuine quality," not just "high-quality hallucinations." The Tool, as an extension of the process, expands the boundary of the AI's capability.
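One way to picture the Tool as an extension of the process is a small registry that the loop dispatches into. The registry, decorator, and `call_tool` helper are hypothetical names for this sketch, and `search_api` is a stub that a real system would replace with an actual search call.

```python
from typing import Callable, Dict

TOOLS: Dict[str, Callable[[str], str]] = {}


def tool(name: str):
    """Register a function under a name the model can refer to."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOLS[name] = fn
        return fn
    return decorator


@tool("search_api")
def search_api(query: str) -> str:
    # Placeholder: a real implementation would call a search service here.
    return f"(stub) top results for: {query}"


def call_tool(name: str, argument: str) -> str:
    """Run a tool and always return text the next Think step can read."""
    if name not in TOOLS:
        return f"Error: unknown tool '{name}'. Available: {sorted(TOOLS)}"
    try:
        return TOOLS[name](argument)
    except Exception as exc:  # failures are observations too
        return f"Error: {name} failed with {exc!r}"
```

Returning errors as text, instead of raising, keeps the feedback loop intact: a failed call becomes one more observation the process can reason about.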

Conclusion: Redefining Context

With this understanding, we can gain a fresh perspective on the trendy term "Context Engineering."

Context is not a target we need to "stuff"; it is the result "produced" by the excellent process we design. In the face of the core constraint—the LLM's limited attention window—a good process is inherently a "Context Architect." It combats forgetting and confusion through two core mechanisms:

- Efficient Information Compression: Processes like Reflexion don't stuff a lengthy failure log directly into memory; instead, they distill it into a high-information-density "lesson learned" (e.g., "Experience: Forbidden City tickets require one-week advance booking"). This is a lossy but efficient compression that preserves the most critical decision-making information at a minimal Token cost.

- Precise Selective Injection: Before every Think step, the process acts like a top surgical nurse, precisely judging, "Which historical lesson does the LLM 'chief surgeon' need right now? Which observation from a tool is most relevant?" It then combines this information into a clean, efficient "data packet" and presents it to the LLM for processing.
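Selective injection can be sketched as a ranking step under a size budget. The relevance score here is plain word overlap, which is an assumption made to keep the example self-contained; a production system would more likely use embedding similarity or a model-based ranker, and count tokens instead of words.

```python
from typing import List


def select_context(task: str, notes: List[str], budget_words: int) -> List[str]:
    """Pick the notes most relevant to the task that fit a word budget."""
    task_words = set(task.lower().split())

    def overlap(note: str) -> int:
        return len(task_words & set(note.lower().split()))

    chosen: List[str] = []
    used = 0
    for note in sorted(notes, key=overlap, reverse=True):
        cost = len(note.split())
        if overlap(note) > 0 and used + cost <= budget_words:
            chosen.append(note)
            used += cost
    return chosen


notes = [
    "Experience: Forbidden City tickets require one-week advance booking",
    "Observation: the weather API timed out twice",
    "Experience: science museum student ticket is cheaper than adult ticket",
]
context = select_context("book tickets for the Forbidden City", notes, 12)
```

With a 12-word budget, only the Forbidden City lesson is injected; the other notes stay in storage for a step where they matter.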

Therefore, the focus of our work is not to "stack" an ever-longer context, but to design an intelligent process capable of dynamically building, pruning, and managing the context.

As developers, we are undergoing a profound role change. Our core job is no longer "Prompt Engineer" but "Agent Workflow Architect." Our core value is embodied in the thinking structure, memory mechanism, and world interaction paradigm we design for the AI.

You only truly step through the door into the Agent era when you stop agonizing over writing a prompt that makes the AI look brilliant and start designing a workflow capable of self-planning, self-correction, and self-iteration.

0x04 Returning to the Essence: Why Is the Agent Effective?

We've explained what an Agent is and how it works from a practical standpoint. But a core question remains: Why is the Think -> Act -> Observe loop fundamentally effective?

To answer this, we need to temporarily step outside the realm of AI. Its scientific foundation rests on two classical theories:

- Cybernetics: This explains how systems achieve goals through feedback, embodying the Agent's process of "approaching" a solution.

- Information Theory: This explains the relationship between information and uncertainty, embodying the Agent's process of "exploring" the problem space.

The Cybernetics Perspective: The Evolution from Open-Loop to Closed-Loop

To understand the Agent's effectiveness, we must first distinguish between two fundamentally different types of systems: the Open-loop System and the Closed-loop System.

A typical open-loop system is an old-fashioned heater with only a timer. You set it to "run for one hour," expecting it to warm the room. But it has no ability to sense the "current room temperature." Thus, its action is blind:

- If it's a sunny day, the room might become unbearably hot after an hour.

- If a cold snap hits, the room might remain frigid after an hour.

The fundamental flaw of the open-loop system is the lack of feedback. It can only execute commands unilaterally, knowing nothing about the result of the execution, and therefore cannot self-adjust based on actual conditions. This is precisely the operational mode of a standard Chatbot—it receives an instruction and generates a result one-shot but cannot verify if that result truly solves the problem.

A closed-loop system solves this problem by introducing a feedback mechanism. Let's look at a classic closed-loop example—the refrigerator.

The refrigerator's core task is to "maintain the fridge compartment at a constant 5°C." It doesn't rely on "guessing" or a fixed schedule but employs a reliable feedback mechanism, which is the embodiment of Cybernetics:

- Set Point: The "5°C" set by the user.

- Sensor: The internal thermometer, which continuously Observes the current actual temperature.

- Controller: The thermostat chip, whose sole job is to Think: "Is there a deviation between the current and target temperature?"

- Actuator: The compressor. As soon as the Controller detects a deviation (e.g., temperature rises to 6°C), it commands the compressor to Act.

- Feedback Loop: The compressor's work lowers the temperature; the Sensor feeds the new temperature back to the Controller; the Controller finds the deviation gone (back to 5°C), and commands the Actuator to stop.
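The difference between the two kinds of system shows up clearly in a toy simulation. For easier comparison, this sketch models both as a cooling device (unlike the heater example in the text), and the cooling and warming rates are invented numbers, not measurements.

```python
def open_loop(start_temp: float, minutes: int, cooling_per_min: float) -> float:
    """Timer only: run the compressor for a fixed time, never measure."""
    return start_temp - cooling_per_min * minutes


def closed_loop(start_temp: float, set_point: float, minutes: int,
                cooling_per_min: float, warming_per_min: float) -> float:
    """Thermostat: measure, compare with the set point, then act."""
    temp = start_temp
    for _ in range(minutes):
        error = temp - set_point                 # Observe + Think
        compressor_on = error > 0                # Controller decision
        temp += -cooling_per_min if compressor_on else warming_per_min  # Act
    return temp


# Same hardware, two different starting conditions (illustrative numbers).
for start in (8.0, 12.0):
    print(start, open_loop(start, 10, 0.5),
          round(closed_loop(start, 5.0, 60, 0.5, 0.25), 2))
```

The open-loop result depends entirely on where it started, while the closed-loop version ends within a quarter of a degree of the 5°C set point from either starting temperature.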

We can now clearly see that the Agent's workflow corresponds one-to-one with this closed-loop system. It is essentially a software form of a closed-loop control system:

- Set Point: The user's instruction

- Sensor: The Observe step, which gets the result returned by the tool

- Controller: The Think step, where the LLM performs reasoning and planning

- Actuator: The Act step, which calls the tool

- Feedback Loop: The result of Observe is passed as input to the next Think cycle

The Observe step is the system's "sensor." Its existence is the key to the Agent's evolution from open-loop to closed-loop. It grants the Agent the ability to sense the "result of the action," allowing it to continuously and stably approach and ultimately achieve the goal through an ongoing "Act-Observe-Correct" cycle in a dynamically changing environment, much like a refrigerator.

The Information Theory Perspective: Entropy Reduction in the "Fog of War"

If Cybernetics explains how the Agent "approaches" a goal, Information Theory reveals what it's "doing" when exploring an unknown problem.

We need to understand a core concept here: Entropy. Information theory defines entropy as a measure of uncertainty. The more information we have about a system, the smaller our uncertainty about it, and the lower its entropy. All problem-solving processes can essentially be viewed as reducing uncertainty (i.e., "Entropy Reduction") by acquiring information.

This concept might sound abstract, but we can understand it with a very intuitive example—dispersing the "Fog of War" in games like StarCraft or League of Legends.

At the start of the game, the entire map is unknown except for the small area around your base. This is referred to as a "High Entropy" state in information theory—the system is full of extreme uncertainty. Your mission (destroying the enemy base) is clear, but the path to it is entirely unknown.

Every move you make is an information-seeking process:

- You send a scouting unit to a dark area (Act).

- The unit's vision lights up a part of the map, allowing you to see terrain, resources, or enemy presence (Observe).

- The result of this "Observation" is information. It changes your knowledge of that area from "completely uncertain" to "certain."

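This process of "using information to eliminate uncertainty" is "Entropy Reduction." Claude Shannon, the father of information theory, provided the mathematical definition for "Information Entropy":

$$H(X) = -\sum_{i} p(x_i) \log_2 p(x_i)$$

where $p(x_i)$ is the probability of the $i$-th possible state.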

The mathematical details of this formula are secondary, but the core idea is critical: the more possibilities a system has, and the more evenly distributed they are, the higher its uncertainty (entropy). The value of acquiring information lies in its ability to rule out some possibilities, thereby reducing the system's total entropy.
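A small worked example makes the idea tangible. It uses the standard Shannon entropy, H = -sum(p * log2(p)), on an invented fog-of-war scenario with eight map regions; the region counts and probabilities are illustrative assumptions.

```python
import math
from typing import Sequence


def entropy_bits(probabilities: Sequence[float]) -> float:
    """Shannon entropy H = -sum(p * log2(p)), skipping zero-probability terms."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Eight map regions, enemy base equally likely to be in any of them.
before = entropy_bits([1 / 8] * 8)          # 3.0 bits

# A scout rules out four regions; the remaining four stay equally likely.
after_scout = entropy_bits([1 / 4] * 4)     # 2.0 bits

# A skewed belief is less uncertain than a uniform one over the same regions.
skewed = entropy_bits([0.7, 0.1, 0.1, 0.1])

information_gained = before - after_scout   # 1.0 bit
```

One scouting action that halves the candidate regions is worth exactly one bit, and the skewed belief scores below 2 bits because the possibilities are no longer evenly distributed.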

The Agent's work is precisely a rigorous entropy-reduction effort in an abstract "problem space." Every Act-Observe loop is a scientific experiment designed to acquire the information that will maximize the reduction of problem uncertainty. When uncertainty is fully eliminated, the unique path to the answer clearly emerges.

In summary, the Agent's effectiveness is not accidental. Cybernetics provides it with goal-oriented correction capability, ensuring it "stays on track" during complex execution paths; while Information Theory defines its core mission in exploring unknown space—systematically eliminating uncertainty by acquiring information through action.

These two theories together provide a solid scientific foundation for the reliability and effectiveness of the Agent framework.

0x05 Conclusion: Your New Role—From "Prompt Engineer" to "Agent Workflow Architect"

Starting from the regret of the "re-take exam," we followed "top student Xiao Ming's" steps, witnessing the progressive evolution of the problem-solving process; through the sharp contrast in "travel planning," we personally experienced the fundamental difference between a dynamic process and static generation; we deeply deconstructed the triple value of the process as the core driver—using structure against chaos, iteration against forgetting, and interaction against vaporware; finally, from the perspectives of Cybernetics and Information Theory, we found the scientific bedrock for the effectiveness of this workflow.

The conclusion is self-evident: The core competitiveness in the future of LLM applications is no longer the size of the model's parameters or the cleverness of a single prompt, but the quality of the intelligent workflow we design around the model.

This is not just a cognitive shift but a profound evolution of professional roles.

Defining Your New Role: Agent Workflow Architect

The "Prompt Engineer"—a transient role born in the wild west of the LLM era—is quickly becoming history. As the industry crosses the shallow waters of "cleverly talking to the AI," the true battleground for developers has already moved. Our job is no longer to be "conversationalists" but "system designers."

Welcome to your new role: Agent Workflow Architect.

The core responsibility of this role is not to polish language but to build systems. Specifically, it involves three core responsibilities:

- Designing the AI's Cognitive Workflow

You define how the AI plans, decomposes, reasons, and reflects. You are designing the AI's "cognitive kernel" and "operational charter," not just a prompt.

- Empowering the AI's Toolbox

You forge the AI's "hands and feet" to interact with the physical and digital world. You need to keenly identify information bottlenecks in the workflow and create or integrate suitable tools to extend the AI's capability boundary.

- Building the AI's Decision Context Architecture

You are no longer a context "loader" but an "architect." An excellent process is naturally a master of context management. Through careful process design, you ensure that at every decision moment the context in front of the AI is neither too much nor too little, so its "attention" stays focused on the most valuable information.

Stepping into the Agent Era: From Implementing the "Heartbeat" to Building the "Brain"

We are moving towards an exciting future: we will create not "machines that answer questions" but "partners that solve problems."

But before that, we must establish a crucial realization: the Think - Act - Observe loop we know today is not the end of Agent design. It is more like the Agent's "heartbeat"—the most fundamental, core atomic rhythm (Agentic Loop) that sustains the intelligent entity's interaction with the world.

Our core job as architects is not to replace this heartbeat but to design a powerful "brain" and "nervous system" for it. However, this powerful "slow thinking" process, while enhancing result quality, also introduces a new, unavoidable engineering challenge—execution efficiency.

How do we mitigate the latency caused by this "slowness," allowing the Agent to achieve high performance while pursuing high quality? This is the first practical problem the "Agent Workflow Architect" must face: Agent Performance Engineering. Frontier engineering practices are seeking breakthroughs in the following key areas:

- Architectural Pruning and Selection: Not all tasks require a complex ReAct loop. The industry finds that for scenarios solvable in one or two direct steps, using the LLM's built-in Tool-Calling paradigm is more efficient. This type of Agent bypasses the explicit "Thought" text generation, directly outputting structured function call instructions, which significantly reduces token consumption and end-to-end latency. This is the first level of performance optimization an architect makes when designing a workflow.

- Parallel Execution: This is the most significant speed boost. When an Agent's plan includes multiple independent subtasks (e.g., "Check Beijing weather" and "Search for popular restaurants"), modern Agent frameworks like LangChain support Parallel Tool Calling. It uses asynchronous I/O (asyncio) to concurrently dispatch multiple API requests that would otherwise wait in series, shortening the total time from "the sum of all task times" to "the longest task time," drastically improving execution efficiency.

- Model Specialization and Routing: The "one-size-fits-all" single-model strategy is being phased out. A better practice is to adopt a mixed-model strategy. For example, use a lightweight, fast model (like gemini-2.5-flash, claude-haiku-4.5) to handle high-frequency, low-complexity tasks like "planning," "routing," or simple tool selection within the workflow. Only for complex nodes requiring deep reasoning should the heavyweight, high-cost core model (like gpt-5-pro, gemini-2.5-pro) be called. This tiered strategy completes most workflow steps at a lower cost and latency.

- Efficient Memory Architecture: The Agent's performance bottleneck often lies in its interaction with memory. The LLM's limited context window means we cannot stuff all historical information into the prompt. Therefore, designing an efficient "memory retrieval" mechanism is paramount. This is not just technology selection (like using a vector database) but strategy design: how to compress, distill, and structurally store conversation history, past action traces, successful experiences, and lessons from failures. An excellent memory architecture, like a top-tier librarian, can accurately and quickly extract "the one piece of knowledge most needed at this moment" from vast information when the Agent requires it, injecting it into the context at the lowest Token cost. This directly determines the Agent's ability to make correct decisions quickly, avoiding getting lost in redundant information, thereby fundamentally boosting its operational efficiency and intelligence level.
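The Parallel Execution item in the list above can be illustrated with a small timing sketch. It uses only the standard-library asyncio module. The `call_tool` function is a hypothetical stand-in that sleeps instead of calling a real API, and the task names and latencies are made up for illustration. This is not how LangChain implements parallel tool calling, only the underlying idea.

```python
import asyncio
import time

async def call_tool(name: str, latency_s: float) -> str:
    """Hypothetical I/O-bound tool call; sleeps to simulate network latency."""
    await asyncio.sleep(latency_s)
    return f"{name}: done"

async def run_sequential(tasks):
    """Await each tool call in turn: total time is roughly the sum of latencies."""
    return [await call_tool(name, latency) for name, latency in tasks]

async def run_parallel(tasks):
    """Dispatch all calls at once: total time is roughly the longest latency."""
    return await asyncio.gather(*(call_tool(name, latency) for name, latency in tasks))

def timed(coro_fn, tasks):
    """Run a coroutine function to completion and measure wall-clock time."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn(tasks))
    return list(results), time.perf_counter() - start

# Assumed independent subtasks with made-up latencies in seconds.
TASKS = [("check_beijing_weather", 0.2), ("search_popular_restaurants", 0.3)]

seq_results, seq_time = timed(run_sequential, TASKS)
par_results, par_time = timed(run_parallel, TASKS)
print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

The gain only applies when the subtasks are truly independent. If one tool call needs the output of another, the two must still run in series.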

Once we've equipped the Agent's 'heartbeat' with an 'accelerator' through refined engineering practice, we can truly begin building the more macro, more intelligent "Brain"—a Cognitive Architecture capable of commanding this heartbeat.

Being satisfied with implementing a basic loop is like being satisfied with a heart beating but not building a body that can think and collaborate. The real value creation happens on top of these fundamental rhythms. Current cutting-edge exploration is focusing on the following directions:

1. Cognitive Orchestration Hub: Intelligent Workflow Orchestration

A mature Agent should act like an experienced project manager, autonomously planning and orchestrating the optimal execution workflow for different tasks. This is the core idea of "dynamic workflow orchestration," and Anthropic's recently released "Skills" feature is a prominent industry example of it. It allows developers to define a rich set of "skills" (i.e., tools), and the model autonomously understands the user's macro intention, acting like a conductor to select, combine, and sequentially call multiple "skills" to complete a complex goal. This represents the evolution of the Agent's Think step from tactical decisions like "what to do next" to strategic planning like "how to achieve the final goal step-by-step."

2. From "Single Soldier" to "Team": Spec-Driven Layered Architecture

For complex tasks that exceed the capacity of a single Agent, layering and decomposition are inevitable. The key is ensuring efficient and reliable team collaboration. The answer is moving from "impromptu role-playing" to a more serious engineering paradigm—"Specification-Driven." In this architecture, the primary task of a "Planning Agent" is to generate a detailed, clear Specification, which acts as a blueprint, becoming the sole contract for all downstream "Execution Agents." This is the core idea explored by frontier projects represented by AI IDE Kiro and the open-source SpecKit package, marking the evolution of Agent collaboration into verifiable "modern software engineering."
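A rough sketch of the "specification as contract" idea follows. Everything here is a hypothetical illustration: the `Specification` and `TaskSpec` shapes, the stubbed agents, and the `conforms` check are assumptions for this example and do not reflect the actual formats used by Kiro or SpecKit. In a real system the planner and executors would be LLM-backed.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TaskSpec:
    task_id: str
    description: str
    required_output_keys: tuple  # fields the executor must return

@dataclass(frozen=True)
class Specification:
    goal: str
    tasks: tuple  # tuple of TaskSpec; frozen so executors cannot alter the contract

def planning_agent(goal: str) -> Specification:
    """Stub planner: returns a fixed spec instead of calling a model."""
    return Specification(goal=goal, tasks=(
        TaskSpec("T1", "Design the API schema", ("endpoints",)),
        TaskSpec("T2", "Write the request handler", ("code", "tests")),
    ))

def execution_agent(task: TaskSpec) -> dict:
    """Stub executor: sees only its own TaskSpec, not the whole conversation."""
    return {key: f"<{key} for {task.task_id}>" for key in task.required_output_keys}

def conforms(task: TaskSpec, output: dict) -> bool:
    """Check an executor's output against the contract."""
    return all(key in output for key in task.required_output_keys)

spec = planning_agent("Build a todo-list service")
results = {task.task_id: execution_agent(task) for task in spec.tasks}
all_ok = all(conforms(task, results[task.task_id]) for task in spec.tasks)
print(all_ok)
```

The point of the design is that each downstream output can be checked mechanically against the spec, rather than trusted on the basis of a role description.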

3. From "Using Tools" to "Creating Tools": On-the-fly Code Generation

Traditional tool use is about the Agent making a selection from a fixed toolbox. The future direction is for the Agent to create the tool itself. Research like CodeAct (Executable Code Actions Elicit Better LLM Agents) is leading this trend. When facing a problem with no ready-made tool, this type of Agent dynamically generates a block of Python code (a micro-tool), executes it in an isolated environment, and uses the execution result to advance the task. This evolves the Act step from a simple "API call" into open-ended "code generation and execution," dynamically expanding the Agent's capability boundary.
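As a minimal sketch of the "generate code, execute it, observe the result" step, the snippet below runs a code string in a separate Python process and returns the captured output as the observation. The `run_generated_code` helper and the `generated` string are hypothetical; in a real Agent the string would come from the model. A plain subprocess is used here only for illustration. It is not a security sandbox, and it is not the CodeAct authors' implementation.

```python
import subprocess
import sys

def run_generated_code(code: str, timeout_s: float = 5.0) -> dict:
    """Run a code string in a child Python process and capture the outcome.

    Process isolation only: untrusted code needs a real sandbox
    (container, VM, or similar) on top of this.
    """
    try:
        proc = subprocess.run(
            [sys.executable, "-I", "-c", code],  # -I: isolated mode
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"ok": False, "stdout": "", "stderr": "timed out"}
    return {
        "ok": proc.returncode == 0,
        "stdout": proc.stdout.strip(),
        "stderr": proc.stderr.strip(),
    }

# Stand-in for model output: a micro-tool that sums the squares of 1..10.
generated = "print(sum(x * x for x in range(1, 11)))"
observation = run_generated_code(generated)
print(observation)
```

The returned dictionary plays the role of the Observe step: on failure, the captured stderr can be fed back to the model so it can repair its own code in the next loop.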

These are the most exciting jobs for an "Agent Workflow Architect" in the coming years. We are no longer the implementers of the basic "heartbeat" but the "creators" of these advanced cognitive architectures.

So, starting today, forget the clever tricks of prompting. Go draw the first flowchart for the task at hand. That is the beginning of becoming an Agent Workflow Architect.

References and Further Reading Resources

Part 1: Core Academic Papers

- Chain of Thought (CoT)

- Title: Chain-of-Thought Prompting Elicits Reasoning in Large Language Models

- Link: https://arxiv.org/abs/2201.11903

- Summary: The first systematic proof that adding "thought process" examples to the prompt can elicit the Large Language Model's ability to solve complex reasoning tasks, a foundational work for structured prompting.

- Tree of Thoughts (ToT)

- Title: Tree of Thoughts: Deliberate Problem Solving with Large Language Models

- Link: https://arxiv.org/abs/2305.10601

- Summary: Extends the linear Chain of Thought into a multi-path tree structure. It allows the Agent to explore multiple different reasoning paths at a single thought node and use self-evaluation to determine the optimal solution, a more powerful structured thinking process.

- Reflexion Framework

- Title: Reflexion: Language Agents with Verbal Reinforcement Learning

- Link: https://arxiv.org/abs/2303.11366

- Summary: The first to formalize and automate the concept of "self-reflection/review." It proves that an Agent can iteratively optimize its behavior by performing "verbal reflection" on past failures without retraining the model.

- ReAct Framework

- Title: ReAct: Synergizing Reasoning and Acting in Language Models

- Link: https://arxiv.org/abs/2210.03629

- Summary: Proposed the milestone Thought -> Act -> Observe framework, deeply interleaving "Reasoning" and "Acting," the theoretical cornerstone for modern Agent frameworks interacting with the external world.

- CodeAct Framework

- Title: Executable Code Actions Elicit Better LLM Agents (CodeAct)

- Link: https://arxiv.org/abs/2402.01030

- Summary: Shows that the Agent's Act step can evolve from "calling predefined tools" to "generating and executing code on the fly," vastly expanding the Agent's capability boundary.

Part 2: Industry Material and Practice Resources

- Foundational Survey: Lilian Weng's 'LLM-powered Autonomous Agents'

- Link: https://lilianweng.github.io/posts/2023-06-23-agent/

- Summary: The most widely cited Agent survey article in the industry, written by OpenAI's Applied Research Head, is the essential first step for building a global cognitive map of the field.

- Core Idea: "The LLM Operating System" by Andrej Karpathy

- Summary: Andrej Karpathy (OpenAI Founding Member) has proposed in multiple forward-looking talks that the LLM is the "CPU" of a new computing paradigm, and the Agent framework acts as the "Operating System (OS)."

- Mainstream Development Frameworks: LangGraph & LlamaIndex

- Links: https://www.langchain.com/langgraph and https://www.llamaindex.ai/

- Summary: The two de-facto standard libraries for building Agent applications today. LangGraph focuses on Process (Chain & Agent), and LlamaIndex focuses on Data (RAG), making them the top choice for developers turning theory into practice.

Frontier Architecture Exploration

- Specification-Driven Collaboration

- Summary: The core of solving Multi-Agent collaboration is establishing a machine-readable "contract" or "Specification." Representative projects in this direction include AI IDE Kiro (https://kiro.dev/) and the open-source toolkit SpecKit (https://github.com/braid-work/spec-kit).

- Intelligent Tool Orchestration

- Summary: A powerful Agent should be able to autonomously plan and call a sequence of tools to achieve a complex goal. Anthropic's "Skills" feature (https://www.anthropic.com/news/skills) elevates the Agent's tool-use capability from "single call" to "intelligent orchestration," representing the best industry practice in this area.

- Social Behavior Emergence: Stanford's "Generative Agents"

- Paper: Generative Agents: Interactive Simulacra of Human Behavior (https://arxiv.org/abs/2304.03442)

- Summary: A phenomenal AI experiment demonstrating how credible spontaneous behaviors can emerge in a virtual society when Agents are equipped with memory and reflection capabilities, an excellent extended reading for Multi-Agent systems exploration.